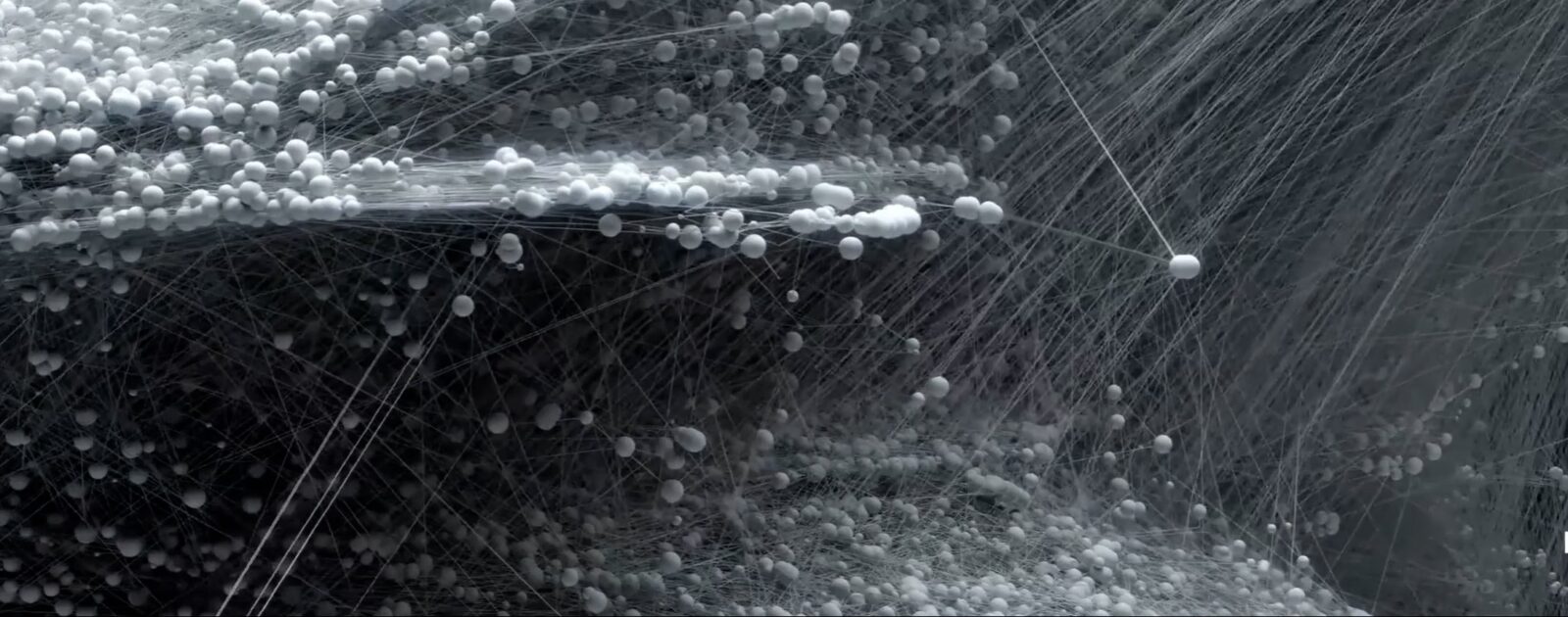

Orchestrating Emergent Storytelling with Embodied Multi-Agent Systems

Embodied LLM agents with memory, coordination, and spatial awareness, designed to produce stable emergent narrative systems in interactive worlds.

Publications

Selected publications spanning generative systems, audiovisual cognition, machine learning for sound and image, and applied technical research.

An interactive 3D segmentation workflow for lung tumors that keeps clinicians in the loop while improving speed and boundary quality.

A robustness study that combines normalization, lung isolation, self-supervision, loss tuning, and test-time augmentation to handle harder segmentation transfers.

An eye-tracking grounded contribution to the screen-time debate, focused on how infants actually engage with educational video.

Research on using deep networks and search techniques to automatically set synthesizer parameters that match a target sound.

Audio style transfer work that operates directly on the waveform, reducing reliance on phase reconstruction and moving closer to real-time stylization.

A methodology for deploying interactive audio and machine learning tools to the browser without rewriting C++ systems from scratch.

A collaborative project on how sound and image cues interact in audiovisual attention, including datasets and analysis tools for gaze behavior.

An fMRI study of how the brain encodes musical scales that are heard versus imagined, comparing absolute and relative pitch strategies.

A study of how media editing and audiovisual clarity can shape infant attention and move viewing behavior toward adult-like patterns.

A thesis connecting perception research and computational art through sound-image synthesis and models of audiovisual representation.

An augmented reality artwork that recomposes what participants hear and see in real time using salient audiovisual fragments.

A paper showing that video synchronizes viewer attention, while the viewing task still reshapes where people look in both static and moving scenes.

An investigation into how motion, contrast, and edge information contribute to gaze allocation in dynamic scenes.

A real-time stylization method that reconstructs photos and video from a learned image corpus to create painterly and memory-like visual substitutions.

A 3D interface for exploring large music archives with weak metadata, using latent audio features to reveal semantic relationships.

A study showing that viewers shift attention across the eyes, mouth, and nose depending on which facial region is most informative.

A paper showing that motion and flicker are strong predictors of where viewers look in dynamic scenes.

A bridge paper between low-level attention models and higher-order scene understanding, focused on how task and meaning shape gaze in moving images.

A study of whether socially meaningful motion gets priority in visual attention during naturalistic moving scenes.